Real-Time Computer Vision Based MIDI Pipeline

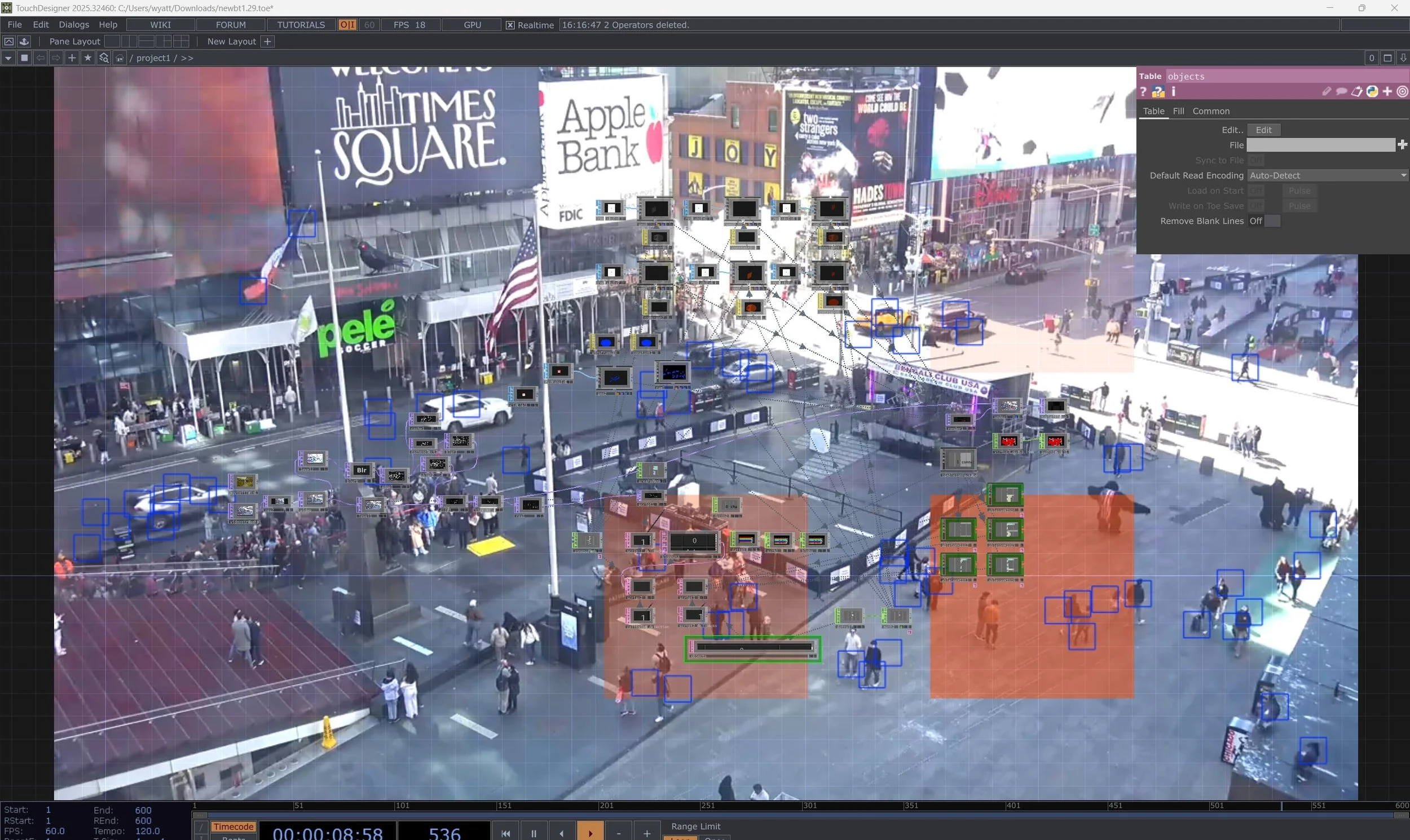

This project explores the use of human motion derived from surveillance footage as a generative input for sound and music. The video above runs in real time from a live webcam stream.

I built custom object detection with OpenCV and handled object and collision logic in Python scripts, all inside TouchDesigner, which also renders the scene. TDAbleton nodes route MIDI data to Ableton instruments. VB-Cable acts as a virtual audio interface that brings the audio back into TouchDesigner for the final output.
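To make the collision-to-MIDI step concrete, here is a minimal sketch in plain Python. It is not the project's actual script: the `Box` type, the function names, the trigger zones, and the note range of 48 to 72 are all hypothetical choices made for illustration. It assumes detections arrive as axis-aligned bounding boxes in pixel coordinates and that a note fires whenever a detected box overlaps a trigger zone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixels (hypothetical type for this sketch)."""
    x: float
    y: float
    w: float
    h: float


def overlaps(a: Box, b: Box) -> bool:
    """Return True when the two boxes share interior area (touching edges do not count)."""
    return (
        a.x < b.x + b.w
        and b.x < a.x + a.w
        and a.y < b.y + b.h
        and b.y < a.y + a.h
    )


def box_to_note(box: Box, frame_width: float, low: int = 48, high: int = 72) -> int:
    """Map the box's horizontal centre to a MIDI note number between low and high."""
    centre = box.x + box.w / 2
    t = min(max(centre / frame_width, 0.0), 1.0)  # clamp to the frame
    return low + round(t * (high - low))


def collision_notes(tracked, zones, frame_width):
    """Return one MIDI note for every tracked box that overlaps any trigger zone."""
    return [
        box_to_note(box, frame_width)
        for box in tracked
        if any(overlaps(box, zone) for zone in zones)
    ]


if __name__ == "__main__":
    people = [Box(310, 0, 20, 20), Box(600, 400, 20, 20)]
    zones = [Box(300, 0, 50, 50)]
    print(collision_notes(people, zones, frame_width=640))
```

In a setup like the one described, the resulting note numbers would then be handed to the MIDI routing stage. Mapping horizontal position to pitch is only one option; box size or vertical position could just as easily drive velocity or another parameter.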

Inspired by Matthew Wilcock, @matthewsinstagram